Run Samba in clustered mode with Ceph

Step 2: Preparing for Samba

The next step is to configure Samba so that it uses CTDB and accesses CephFS. (Operating Samba on the Ceph cluster nodes is a tempting proposal, but the Ceph developers strongly recommend you avoid the potential loopback problems that could result from enabling a CephFS filesystem mount on a host that is part of the Ceph cluster itself.)

Samba will run on separate hosts and access CephFS remotely. The other servers in this configuration answer to the names of Daisy and Eric.

You first need a CephFS mount on the Samba systems. Ceph relies on its built-in authentication mechanism, CephX, which ceph-deploy also enables. For the mount to work, you need the secret key (in effect, the password) of an active CephX user. In this article, I assume that access relies on the rights of the administrative user, admin. The Ceph documentation explains the essentials of user management [3].

The key of the admin user is found on the master server in /etc/ceph/ceph.client.admin.keyring; it is the value that follows key = (in this example, AQCj2YpRiAe6CxAA7/ETt7Hcl9IyxyYciVs47w==). This key belongs in a separate file with a freely selectable name, such as /etc/ceph/admin.secret. Now you can mount CephFS on /mnt/samba:

sudo mount -t ceph IP_address:6789:/ /mnt/samba -o name=admin,secretfile=/etc/ceph/admin.secret

The IP address should be the IP address of a MON server, such as the local IP address of Alice. You can also add the mount entry to your /etc/fstab file:

IP_address:6789:/ /mnt/samba ceph name=admin,secretfile=/etc/ceph/admin.secret,noatime 0 2

After you reboot the system, CephFS is immediately available under /mnt/samba. The entry and the keyfile should be present on all hosts that want to mount a CephFS filesystem.
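The keyfile step described above can be sketched in shell. This is only a minimal sketch under stated assumptions: the helper name extract_ceph_key is hypothetical, the keyring is assumed to contain a single line of the form key = <value>, and the demonstration writes to a temporary directory instead of /etc/ceph so that it can be tried without a cluster.

```shell
# Hypothetical helper: print the key stored in a CephX keyring file.
extract_ceph_key() {
    # A keyring line looks like "key = AQ...=="; print the third field.
    awk '$1 == "key" && $2 == "=" { print $3; exit }' "$1"
}

# Demonstration with a throwaway keyring that holds the example key
# from the article (the temporary paths are assumptions).
workdir=$(mktemp -d)
printf '[client.admin]\n\tkey = AQCj2YpRiAe6CxAA7/ETt7Hcl9IyxyYciVs47w==\n' \
    > "$workdir/ceph.client.admin.keyring"

# Create the secret file inside a subshell with a restrictive umask,
# so that only its owner can read it.
( umask 077
  extract_ceph_key "$workdir/ceph.client.admin.keyring" > "$workdir/admin.secret" )

cat "$workdir/admin.secret"
```

On a real Samba host, the output would go to the file named in the secretfile= mount option, for example /etc/ceph/admin.secret.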

Step 3: Using CTDB

To make CTDB available, you must enable cluster mode explicitly when compiling Samba. All current distributions come with cluster-capable Samba in a sufficiently recent version – CTDB requires version 4.2 or newer of Samba.

At least four parameters must exist in your smb.conf for CTDB to work:

netbios name = <entry>
clustering = yes
idmap config * : backend = autorid
idmap config * : range = 1000000-1999999
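A quick way to confirm that these four settings are present is a small shell check. The following is a rough sketch, not a Samba tool: the function name check_ctdb_params, the sample NetBIOS name SAMBACLUSTER, and the temporary file are assumptions made for illustration, and the check only looks for matching lines without validating the rest of the file.

```shell
# Hypothetical check: report which clustering-related settings are missing
# from the smb.conf file given as the first argument.
check_ctdb_params() {
    conf=$1
    missing=0
    for pattern in \
        '^[[:space:]]*netbios name[[:space:]]*=' \
        '^[[:space:]]*clustering[[:space:]]*=[[:space:]]*yes' \
        '^[[:space:]]*idmap config \*[[:space:]]*:[[:space:]]*backend[[:space:]]*=[[:space:]]*autorid' \
        '^[[:space:]]*idmap config \*[[:space:]]*:[[:space:]]*range[[:space:]]*='
    do
        # -E: extended regex, -i: ignore case, -q: no output, status only
        if ! grep -Eiq "$pattern" "$conf"; then
            echo "no line matches: $pattern"
            missing=1
        fi
    done
    return "$missing"
}

# Demonstration with a throwaway file (SAMBACLUSTER is a made-up name).
sample=$(mktemp)
cat > "$sample" <<'EOF'
[global]
    netbios name = SAMBACLUSTER
    clustering = yes
    idmap config * : backend = autorid
    idmap config * : range = 1000000-1999999
EOF

check_ctdb_params "$sample" && echo "all four parameters found"
```

On a Samba host, the function would be pointed at the real configuration file instead of the sample.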

You also need to install the separate ctdb package, which contains all the programs related to CTDB.

In addition, you need several CTDB-specific configuration files that you have to adapt to local conditions. Some required values are:

- CTDB_NODES, which points to a file that lists all participating nodes of the Samba cluster. The default is /etc/ctdb/nodes; the program expects the IP address of one cluster node on each line of the file.

- CTDB_RECOVERY_LOCK, which points to a file that CTDB expects in the shared storage; in this example, /mnt/samba/lock.

- CTDB_PUBLIC_ADDRESSES, which is a bit complicated: CTDB expects a file containing a list of all network interfaces of each node together with the associated IPs. The syntax of the file is IP/netmask <network_interface>. For the example with Daisy and Eric, the file might look like:

10.42.0.1/24 eth0
10.42.0.2/24 eth0

CTDB_PUBLIC_ADDRESSES makes it clear that CTDB is a lightweight cluster manager: CTDB needs the details of the IP addresses so that it can activate a failed node's IP address on a different Samba node.

If the host to which an IP address from CTDB_PUBLIC_ADDRESSES is assigned fails at any time, CTDB automatically enables that IP elsewhere and thus ensures that the CIFS clients continue to receive responses to their requests. The IP addresses from CTDB_PUBLIC_ADDRESSES also need to be entered in DNS so that name resolution works.
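Because a malformed line in the public addresses file is easy to overlook, a small syntax check can help. The sketch below is an illustration only: the function name validate_public_addresses is hypothetical, it covers just the simple IPv4 form IP/netmask <network_interface> described above, and it does not check whether the addresses or interfaces actually exist.

```shell
# Hypothetical check: every non-empty line must read "a.b.c.d/bits interface".
validate_public_addresses() {
    awk '
        NF == 0 { next }    # skip blank lines
        NF != 2 || $1 !~ /^[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+\/[0-9]+$/ {
            printf "line %d is malformed: %s\n", NR, $0
            bad = 1
        }
        END { exit bad + 0 }    # status 0 only if no malformed line was seen
    ' "$1"
}

# Demonstration with the two example entries for Daisy and Eric,
# written to a temporary file instead of the real CTDB configuration.
addr_file=$(mktemp)
printf '10.42.0.1/24 eth0\n10.42.0.2/24 eth0\n' > "$addr_file"

validate_public_addresses "$addr_file" && echo "public addresses file looks well formed"
```

A line without a netmask, such as 10.42.0.3 eth0, would be reported together with its line number, and the function would return a non-zero status.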

After these steps, Samba is ready to go: In addition to the well-known services smbd, nmbd, and winbind, the ctdb service should be running also. The next step is to run the command that shows whether the CTDB setup worked:

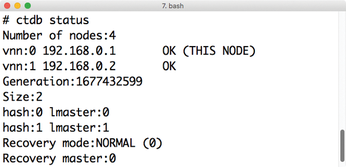

ctdb status

Multiple nodes should show up, and the cluster should have a status of NORMAL (Figure 4). Then, each of the CTDB nodes can act as a single Samba server.
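For scripted monitoring, the NORMAL state can be tested without reading the output by eye. The following sketch assumes that the status output contains a line beginning with Recovery mode: followed by NORMAL; the exact wording may differ between CTDB versions, and the helper name cluster_is_normal is hypothetical.

```shell
# Hypothetical helper: succeed if the text on stdin reports recovery mode NORMAL.
cluster_is_normal() {
    grep -q 'Recovery mode: *NORMAL'
}

# On a cluster node, the intended use would be:
#   ctdb status | cluster_is_normal && echo "cluster healthy"

# Offline demonstration with a single assumed status line.
if printf 'Recovery mode:NORMAL (0)\n' | cluster_is_normal; then
    echo "cluster healthy"
fi
```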

In the background, Samba stores data to the cluster. A built-in health check,

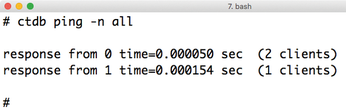

ctdb ping

pings all the other CTDB nodes from the current node and displays the response times (Figure 5).

Infos

- [1] Ceph Jewel for Ubuntu 16.04: http://download.ceph.com/debian-jewel/dists/xenial/main/binary-amd64/

- [2] vfs_ceph for Samba: http://manpages.ubuntu.com/manpages/xenial/man8/vfs_ceph.8.html

- [3] CephX management: http://docs.ceph.com/docs/hammer/rados/operations/user-management/
