Setting up a file server cluster with Samba and CTDB

Close Ranks

© Alison Bowden, 123RF

Samba Version 3.3 and the CTDB lock manager provide full cluster support.

The Open Source Samba [1] system has provided file and print services for Windows and Unix-style computers since 1992. The Samba developers [2] always had difficulties emulating Windows server characteristics without the specifications, but thanks to Microsoft finally releasing the server protocol specifications late in 2007 [3], the task is now easier.

A recent add-on tool dubbed CTDB [4] now provides Samba with a feature that even Windows does not support: clustered file servers. Samba now offers the option of a distributed filesystem with multiple nodes that looks like a single, consistent, high-performance file server. And this cluster-based file server system is (more or less) infinitely scalable with respect to the number of nodes. (Windows 2003 does have some support for clustering, but it is designed with web and database servers in mind and restricted to eight nodes.)

In this article, I describe some of the problems Samba solves with clustering, and I take a look at the history and design of the CTDB add-on at the center of Samba's clustering support. In addition, you'll get some hints on how to configure CTDB and set up your own Samba cluster.

CTDB the Manager

Although CTDB was originally developed for Samba clustering, it has subsequently evolved into a management solution for a collection of other services, such as NFS, the vsftpd FTP daemon, and Apache. CTDB manages these services by starting and stopping programs and by monitoring programs at run time. Additionally, CTDB takes the necessary actions to support IP address switching.

The CTDB_MANAGES_service configuration parameters in /etc/sysconfig/ctdb specify whether or not CTDB manages a service; these parameters can be set to yes or no. Thus far, the following parameters exist:

- CTDB_MANAGES_SAMBA

- CTDB_MANAGES_WINBIND

- CTDB_MANAGES_NFS

- CTDB_MANAGES_VSFTPD

- CTDB_MANAGES_HTTPD

You need to remove the start scripts for the CTDB-managed services from the runlevels, for example, using chkconfig -s smb off. Incidentally, CTDB_MANAGES_SAMBA has nothing to do with cluster-wide handling of the TDB files. CTDB does this independently of its service management functionality. Service monitoring is performed by event scripts in /etc/ctdb/events.d/ called by the CTDB daemon. Thus, to put a new service in the capable hands of CTDB, you just need to write a new event script.
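To check which services a node has handed over to CTDB, you can scan the configuration for the CTDB_MANAGES_ parameters. The following Python sketch assumes plain KEY=value lines, as is usual for sysconfig files; the function name managed_services and the sample excerpt are made up for illustration and are not part of CTDB.

```python
def managed_services(config_text):
    """Return the services whose CTDB_MANAGES_<service> parameter is set to yes."""
    prefix = "CTDB_MANAGES_"
    services = []
    for raw in config_text.splitlines():
        line = raw.strip()
        # Skip blank lines, comments, and anything that is not KEY=value.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        key = key.strip()
        value = value.strip().strip("\"'").lower()
        if key.startswith(prefix) and value == "yes":
            services.append(key[len(prefix):].lower())
    return sorted(services)


# Hypothetical excerpt in the style of /etc/sysconfig/ctdb
sample = """\
CTDB_MANAGES_SAMBA=yes
CTDB_MANAGES_WINBIND=yes
CTDB_MANAGES_NFS=no
"""
print(managed_services(sample))  # ['samba', 'winbind']
```

In practice, you would pass in the contents of the real configuration file instead of the sample string.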

The Problem

The Samba developers had to solve a few intrinsic problems in order to serve up the same file at the same time to multiple client nodes attached to a filesystem cluster. First of all, the Common Internet Filesystem (CIFS) protocol used with Samba and Microsoft file service systems requires sophisticated locking mechanisms, including share modes that exclusively lock whole files and byte-range locks to lock parts of files. These mandatory locks in Windows are just not convertible to the advisory locks used in the Posix landscape [5]. To work around this issue, Samba must store CIFS locking information in an internal database and check the database on file access.
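The idea of keeping lock information in a database and consulting it on every access can be sketched in a few lines of Python. This is a toy model under simplifying assumptions, not Samba's implementation: the class names, the client identifiers, and the rule that a client never conflicts with its own locks are all illustrative choices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ByteRangeLock:
    owner: str       # hypothetical client identifier
    start: int       # first locked byte
    length: int      # number of locked bytes
    exclusive: bool  # True for a write lock, False for a shared read lock


class LockTable:
    """Toy stand-in for the internal database that records CIFS locks."""

    def __init__(self):
        self._locks = {}  # file name -> list of ByteRangeLock

    @staticmethod
    def _conflicts(held, wanted):
        overlap = (held.start < wanted.start + wanted.length
                   and wanted.start < held.start + held.length)
        # Shared locks may overlap each other; in this sketch an owner
        # never conflicts with its own locks.
        return (overlap and held.owner != wanted.owner
                and (held.exclusive or wanted.exclusive))

    def try_lock(self, name, wanted):
        held = self._locks.setdefault(name, [])
        if any(self._conflicts(h, wanted) for h in held):
            return False
        held.append(wanted)
        return True

    def may_access(self, name, owner, start, length, write):
        """Mandatory semantics: every read or write is checked against the table."""
        probe = ByteRangeLock(owner, start, length, exclusive=write)
        return not any(self._conflicts(h, probe)
                       for h in self._locks.get(name, []))


table = LockTable()
table.try_lock("report.doc", ByteRangeLock("client-a", 0, 100, True))
print(table.may_access("report.doc", "client-b", 50, 10, write=False))  # False
print(table.may_access("report.doc", "client-b", 200, 10, write=True))  # True
```

The important point is the may_access() check: with advisory locks, a process that never asks about locks can still read and write, whereas here the server refuses the access itself.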

Also, the various Samba processes must exchange messages. For example, a client can send a lock request with a timeout for a file area currently locked by another client. If the other client releases the lock within the timeout, Samba grants the new lock and sends a signal to tell the waiting process that a message is available. The system must also synchronize ID mapping tables, which map Windows users and groups to Unix user and group IDs.

The clustering problem also adds other complications. For instance, as a member server in the domain, Samba needs to have the same join information on all nodes; that is, it needs the computer account password and the domain SID. In addition, the active SMB client connections and sessions on the nodes must be known across all nodes.

Samba's Trivial Database (TDB) [6] is a small, fast database similar to Berkeley DB and GNU DBM. TDB supports locking and thus simultaneous writing. Samba uses TDB internally in a vast number of places, including caching and other data manipulation tasks. Samba even uses the mmap() memory mapping mechanism to map TDB areas directly into main memory, which means that TDBs can act as fast shared memory.
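The effect of memory mapping a file can be illustrated without TDB itself. The following Python sketch maps the same temporary file twice with the standard mmap module and shows that a write through one mapping is immediately visible through the other, much as it would be for two processes that map the same database file. The function name shared_mapping_demo is made up for this example.

```python
import mmap
import os
import tempfile


def shared_mapping_demo():
    """Write through one shared mapping and read the bytes back through another."""
    fd, path = tempfile.mkstemp()
    try:
        os.ftruncate(fd, mmap.PAGESIZE)  # a mapping needs a non-empty file
        # Two independent mappings of the same file (shared by default)
        writer = mmap.mmap(fd, mmap.PAGESIZE)
        reader = mmap.mmap(fd, mmap.PAGESIZE)
        writer[0:5] = b"hello"
        seen = bytes(reader[0:5])
        writer.close()
        reader.close()
        return seen
    finally:
        os.close(fd)
        os.unlink(path)


print(shared_mapping_demo())  # b'hello'
```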

As a first step in managing the challenge of a clustered file service, the Samba developers extended the TDB database to improve support for clustering scenarios. Cluster TDB (CTDB) made its debut in spring 2007; the CTDB Samba connection was originally only available in the form of the customized 3.0.25-ctdb version. The cluster code found its way into the default Samba distribution with version 3.2.0 in July 2008, but this initial effort was not complete. With Samba 3.3.0, which was released in January of this year, Samba now has full clustering support.

Ronnie Sahlberg is now the CTDB project maintainer. His CTDB Git branch [7] is the crystallization point for the official CTDB code. More recent CTDB versions support persistent TDBs and database transactions at the API level, making CTDB usable for any TDB-related tasks. Additionally, the developers have extended CTDB by adding a plethora of monitoring and high-availability features. The full CTDB history is on the Samba wiki [8].

How CTDB Works

Samba runs on all the nodes, and all the Samba instances appear as a single Samba server from the client's viewpoint. The Samba instances are configured identically and serve up the same file areas from the shared filesystem as shares. Thus, the CTDB model is basically a load-balancing cluster with high-availability functionality.

Behind the scenes, the CTDB daemon, ctdbd, runs on every node. The daemons negotiate the cluster TDB database metadata. Each CTDB daemon has a local copy (LTDB) of the TDB database that it maintains for CTDB; this copy does not reside on the cluster filesystem, but in fast local memory. Access to the data is handled by the local TDBs.

Samba uses TDB databases for various purposes. The database for locking, messaging, connections, and sessions only contains volatile data, but it is data that Samba reads and writes frequently. Other databases contain non-volatile information. Samba does not need write access to this persistent data very often, but read access is all the more critical. The data integrity requirements are thus stricter for the persistent databases than for the volatile ones. On the other hand, performance is more critical for volatile databases.

Samba uses two completely different approaches for managing volatile and persistent databases: In the case of the persistent databases, each node always has a complete and up-to-date copy. Read access is local. If a node wants to write, it locks the whole database in the scope of a transaction and completes its read and write operations within this transaction. Committing the transaction distributes all the changes to all other CTDB nodes.
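The persistent case can be modeled in Python as a sketch. All class names here are hypothetical, the nodes live in one process, and a threading.Lock stands in for the cluster-wide database lock; a real cluster would send the committed changes over the network.

```python
import threading


class PersistentNode:
    def __init__(self, name):
        self.name = name
        self.db = {}  # complete local copy of the persistent database

    def read(self, key):
        return self.db.get(key)  # read access is purely local


class PersistentCluster:
    def __init__(self, node_names):
        self.nodes = {n: PersistentNode(n) for n in node_names}
        self._db_lock = threading.Lock()  # stand-in for the whole-database lock

    def transaction(self, node_name):
        return Transaction(self, self.nodes[node_name])


class Transaction:
    def __init__(self, cluster, node):
        self.cluster = cluster
        self.node = node
        self.pending = {}  # changes made inside this transaction

    def __enter__(self):
        self.cluster._db_lock.acquire()  # lock the whole database
        return self

    def read(self, key):
        return self.pending.get(key, self.node.db.get(key))

    def write(self, key, value):
        self.pending[key] = value

    def __exit__(self, exc_type, exc, tb):
        try:
            if exc_type is None:
                # Commit: distribute all changes to every node.
                for node in self.cluster.nodes.values():
                    node.db.update(self.pending)
        finally:
            self.cluster._db_lock.release()
        return False  # do not swallow exceptions; an error aborts the commit


cluster = PersistentCluster(["node0", "node1", "node2"])
with cluster.transaction("node1") as tx:
    tx.write("domain_sid", "S-1-5-21-example")
print(cluster.nodes["node2"].read("domain_sid"))  # S-1-5-21-example
```

Writes are expensive in this scheme because every commit touches every node, which is acceptable for data that changes rarely.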

For volatile data, on the other hand, each node just keeps the records it has already accessed in its local storage. This means that only one node, the data master, has the current data for a record. If a node wants to write or read a record, it first checks to see if it is the data master for the record and, if so, it accesses the LTDB directly. If not, it first picks up the current record data from the ctdbd, assumes the data master role, and then writes locally.

Because the data master always writes directly to the local TDBs, a single CTDB node is not slower than an unclustered Samba. The secret behind CTDB's excellent scalability is that the record data is only sent to a single node, instead of all nodes, for the volatile databases. After all, it's perfectly okay to lose the changes that one node makes to a volatile database if the node leaves the cluster. The information only relates to client connections on the node that has failed. The other nodes can't do anything with this data.
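The data master scheme for volatile records can be sketched as follows. This is a single-process illustration with hypothetical names; the data_master dictionary stands in for the bookkeeping that the CTDB daemons do among themselves, and the migration counter simply counts how often a record has to travel between nodes.

```python
class VolatileCluster:
    def __init__(self, node_names):
        self.ltdb = {n: {} for n in node_names}  # one local TDB per node
        self.data_master = {}                    # record key -> node name
        self.migrations = 0                      # record transfers between nodes

    def _become_master(self, node, key):
        current = self.data_master.get(key)
        if current == node:
            return  # already data master: purely local access
        if current is not None:
            # Fetch the current record from the present data master.
            # The old node keeps a copy, but that copy is now stale.
            self.ltdb[node][key] = self.ltdb[current][key]
            self.migrations += 1
        self.data_master[key] = node

    def write(self, node, key, value):
        self._become_master(node, key)
        self.ltdb[node][key] = value  # write locally

    def read(self, node, key):
        if key not in self.data_master:
            return None  # record does not exist anywhere
        self._become_master(node, key)
        return self.ltdb[node][key]


cluster = VolatileCluster(["node0", "node1"])
cluster.write("node0", "lock:report.doc", "client-a")
cluster.write("node0", "lock:report.doc", "client-b")  # second write stays local
print(cluster.migrations)                               # 0
print(cluster.read("node1", "lock:report.doc"))         # client-b
print(cluster.data_master["lock:report.doc"], cluster.migrations)  # node1 1
```

As long as one node keeps working on the same records, no data moves at all; a transfer happens only when a different node touches a record.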

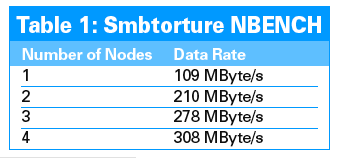

Performance tests on a cluster [9] confirm that the design is solid. An Smbtorture NBENCH test running on 32 clients is shown in Table 1. A single connection to a cluster node share achieves a transfer rate of 1.7GBps.

Self-Repairing

If a node fails, the volatile databases are likely to lose the data master for some records. The recovery process restores a consistent database status: One node acts as the recovery master and collects the records from all the other nodes. If it finds a record without a data master, it looks for the node with the newest copy. To make this possible, CTDB stores a record sequence number in an extra header field that the standard TDB does not have; the number is incremented whenever the record is transferred to another node. At the end of the recovery process, the recovery master is the data master for every record in every TDB.
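The core of the recovery step, picking the newest copy of each record by its sequence number, can be sketched in Python. The data layout (a dictionary of (sequence number, value) pairs per node) and the function name recover are assumptions made for this illustration.

```python
def recover(node_copies):
    """Merge the record copies of all surviving nodes.

    node_copies maps a node name to {key: (sequence_number, value)}.
    For every key, the copy with the highest sequence number wins.
    """
    recovered = {}
    for records in node_copies.values():
        for key, (seq, value) in records.items():
            if key not in recovered or seq > recovered[key][0]:
                recovered[key] = (seq, value)
    return recovered


# Hypothetical state after a node has dropped out of the cluster
surviving = {
    "node0": {"session:17": (3, "old"), "lock:a": (1, "x")},
    "node2": {"session:17": (5, "new")},
}
print(recover(surviving)["session:17"])  # (5, 'new')
```

The merged result then lives on the recovery master, which thereby becomes the data master for every record.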

The recovery master is chosen by an election process, which uses what is known as a recovery lock. This recovery lock feature requires the cluster filesystem underneath CTDB to support Posix fcntl() locks. Other, more complex election processes could potentially remove this requirement, but on the other hand, relying on an intact cluster filesystem solves the error-prone split brain problem in CTDB.
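The principle of an election by file lock can be shown with Python's fcntl module. This is a sketch of the general technique, not the code ctdbd uses: the function name and the lock file path are hypothetical, and the temporary directory stands in for a directory on the shared cluster filesystem. Keep in mind that Posix locks belong to a process, so the contest is between processes (or nodes), not between calls inside one process.

```python
import fcntl
import os
import tempfile


def try_take_recovery_lock(path):
    """Return an open file descriptor if this process won the lock, else None."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o600)
    try:
        # Non-blocking, exclusive Posix lock on the whole file
        fcntl.lockf(fd, fcntl.LOCK_EX | fcntl.LOCK_NB)
    except OSError:
        os.close(fd)  # somebody else holds the lock
        return None
    return fd         # keep the descriptor open for as long as the lock is needed


# Hypothetical location; on a real cluster this would be on the shared filesystem.
lock_path = os.path.join(tempfile.mkdtemp(), "recovery.lock")
fd = try_take_recovery_lock(lock_path)
print("recovery master" if fd is not None else "another node holds the lock")
```

Because only one holder can own the exclusive lock at any time, two halves of a partitioned cluster cannot both elect a recovery master as long as the filesystem's locking is coherent.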

Tests

If you run CTDB, you need a clustering filesystem that is mounted on all nodes, supports Posix fcntl() locking semantics, and guarantees consistent locking across all nodes. Which filesystem this is, or whether the storage capacity is provided by a SAN using Fibre Channel, by a storage node attached via iSCSI, or even by local disk partitions, is not important. CTDB is happy as long as it can mount the same filesystem on all nodes and can apply byte-range locks to it. As a minimal case, a single machine with a local ext3 partition would suffice.

The ping-pong test shows whether a cluster filesystem is suitable for CTDB. The ping_pong.c code [15] creates a program that tests to see whether a clustering filesystem supports coherent byte-range locking. At the same time, it measures the locking performance, ascertains the integrity of simultaneous write access, and gauges memory mapping (mmap()) performance. Details of the ping-pong test are available from the Samba wiki [16].

The filesystem that performs best with CTDB in tests is IBM's proprietary GPFS [17]; CTDB and Samba clustering owe much of their development to sponsorship from IBM. Red Hat's Global File System (GFS) Version 2 [18] also performed well. Watch out for CTDB packages in the next version of Fedora. Additionally, reports of deployment with the GNU Cluster File System (GlusterFS) [19] and Sun's Lustre [20] are positive. The Oracle Cluster File System (OCFS2) [21] should be suitable once the Posix fcntl() locking implementation has been completed [22].