A bag o' tricks from the Perlmeister

Undercover Information

If you have been programming for decades, you've likely gathered a personal bag of tricks and best practices over the years – much like this treasure trove from the Perlmeister.

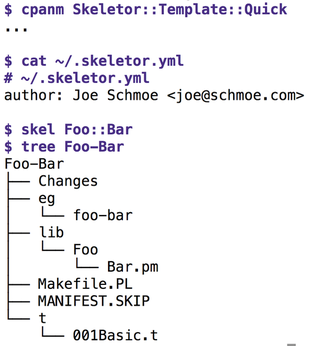

For each new Perl project – and I launch several every week – it is necessary to first prime the working environment. After all, you don't want a pile of spaghetti scripts lying around that nobody can maintain later. A number of template generators are available on CPAN. My attention was recently drawn to App::Skeletor, which uses template modules to adapt to local conditions. Without further ado, I wrote Skeletor::Template::Quick to adapt the original to my needs and uploaded the results to CPAN.

Store the author info in the ~/.skeletor.yml file in your home directory, as shown in Figure 1, and install the template module from CPAN. Running the skel Foo::Bar command then instantly dumps a handful of predefined files for a new CPAN distribution into a new directory named Foo-Bar. Other recommended tools for this scaffolding work are h2xs, which ships with Perl, and the Module::Starter module from CPAN.
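For comparison, here is a minimal sketch of how the two alternative tools might be invoked for the same Foo::Bar module. The function names (dist_dir, scaffold_h2xs, scaffold_module_starter) are hypothetical helpers made up for this illustration, and the author name and email address are placeholders. The scaffold functions are only defined, not called, so nothing is written to disk until you run one yourself.

```shell
#!/bin/sh
# Hypothetical helper: derive the distribution directory name from a
# module name by replacing each "::" with "-" (Foo::Bar -> Foo-Bar).
dist_dir() {
    printf '%s\n' "$1" | sed 's/::/-/g'
}

# Scaffold with h2xs, which ships with Perl.
#   -A  omit the autoloader code
#   -X  omit XS (C extension) parts, for a pure-Perl module
#   -n  name of the module to create
scaffold_h2xs() {
    h2xs -AX -n "$1"
}

# Scaffold with the module-starter command from Module::Starter.
# Author name and email below are placeholders, not real data.
scaffold_module_starter() {
    module-starter --module="$1" \
        --author="Jane Doe" --email="jane@example.com"
}

dist_dir "Foo::Bar"    # prints Foo-Bar
```

Calling scaffold_h2xs "Foo::Bar" or scaffold_module_starter "Foo::Bar" in an empty working directory should then produce a distribution skeleton. The exact set of generated files depends on the tool and its version.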

[...]

