Be careful what you wish for

Artificial Intelligence

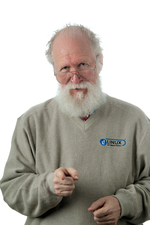

"maddog" ponders the rise of intelligent machines.

Recently, I read that Stephen Hawking, the world-famous physicist, had warned that artificial intelligence (AI) could be either very useful or the worst thing that ever happened to mankind. The article that carried Hawking's comments went on to discuss the many things AI could do for us, from analyzing and extracting information from the Internet, to making quick judgments in deploying and firing military weapons, to taking over the world.

In the comments section of the article, some people agreed with Stephen Hawking, but many more brushed off his warning by saying "who would create such a thing?" or "only a supreme being could create something which is truly intelligent."

Those of you who have been reading my work for a while know that I am a great fan of Dr. Alan Turing. Those who know Dr. Turing's work also know that his interest in computers stemmed largely from a desire to understand how the human mind works and to create a machine that could think like a human. Dr. Turing's "test" for what constitutes artificial intelligence is still used today, 60 years after his death.

[...]

