Parallel Programming with OpenMP

Is Everyone There?

In some cases, it is necessary to synchronize all the threads. The #pragma omp barrier statement sets up a virtual hurdle: All the threads wait until the last one reaches the barrier before processing can continue. But think carefully before you introduce an artificial barrier: Threads that are waiting do not do any work, so every barrier eats into the performance boost that parallelizing the program gave you. Listing 4 shows an example in which a barrier is unavoidable.

Listing 4

Unavoidable Barrier

The Calculationfunction() line in this listing calculates the second argument with reference to the first one. The arguments in this case could be arrays, and the calculation function could be a complex mathematical matrix operation. Here, #pragma omp barrier is essential: Without it, some threads would start the second round of calculations before the values in B that they depend on are available.

Some OpenMP constructs (such as parallel, for, and single) end with an implicit barrier. On for and single, you can explicitly disable it by adding a nowait clause, as in #pragma omp for nowait; the barrier at the end of a parallel region cannot be removed. Other synchronization mechanisms include:

- #pragma omp master {Code}: Code that is executed only once and only by the master thread.

- #pragma omp single {Code}: Code that is executed only once, but not necessarily by the master thread.

- #pragma omp flush (Variables): Writes cached copies of the listed variables back to main memory, which ensures that all threads have a consistent view of memory.

These synchronization mechanisms will help keep your code running smoothly in multi-processor environments.

Library Functions

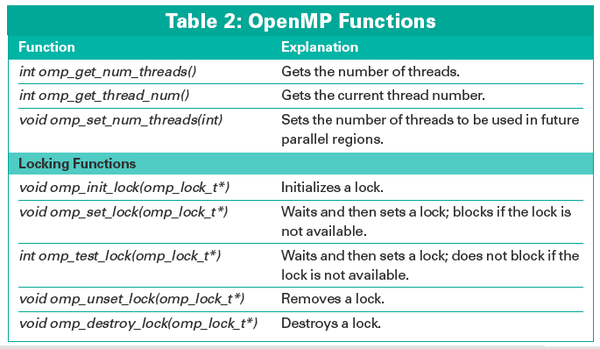

OpenMP also provides a number of run-time library functions, which are listed in Table 2. If you want to use them, you need to include the omp.h header file in C/C++. To make sure the program still builds without OpenMP, it makes sense to wrap these calls in #ifdef _OPENMP for conditional compilation.

    #ifdef _OPENMP
    #include <omp.h>
    threads = omp_get_num_threads();
    #else
    threads = 1;
    #endif

Locking functions allow a thread to lock a resource by reserving exclusive access to it with omp_set_lock(), which blocks until the lock is free. Other threads can call omp_test_lock() instead, which tries to take the lock without blocking and reports whether it succeeded. This setup is useful if you want multiple threads to write data to a file but want to restrict access to one thread at a time. When you use locking functions, be careful to avoid deadlocks.

A deadlock can occur if threads need resources but lock each other out. For example, thread 1 successfully locks resource A and then waits for resource B, while thread 2 holds B and waits for A. Both threads wait forever.

Environmental Variables

Some environmental variables control the run-time behavior of OpenMP programs; the most important is OMP_NUM_THREADS. It specifies how many threads can operate in a parallel region, which matters because too many threads will actually slow down processing. In bash, export OMP_NUM_THREADS=1 tells a program to run with just one thread, just like a normal serial program.
